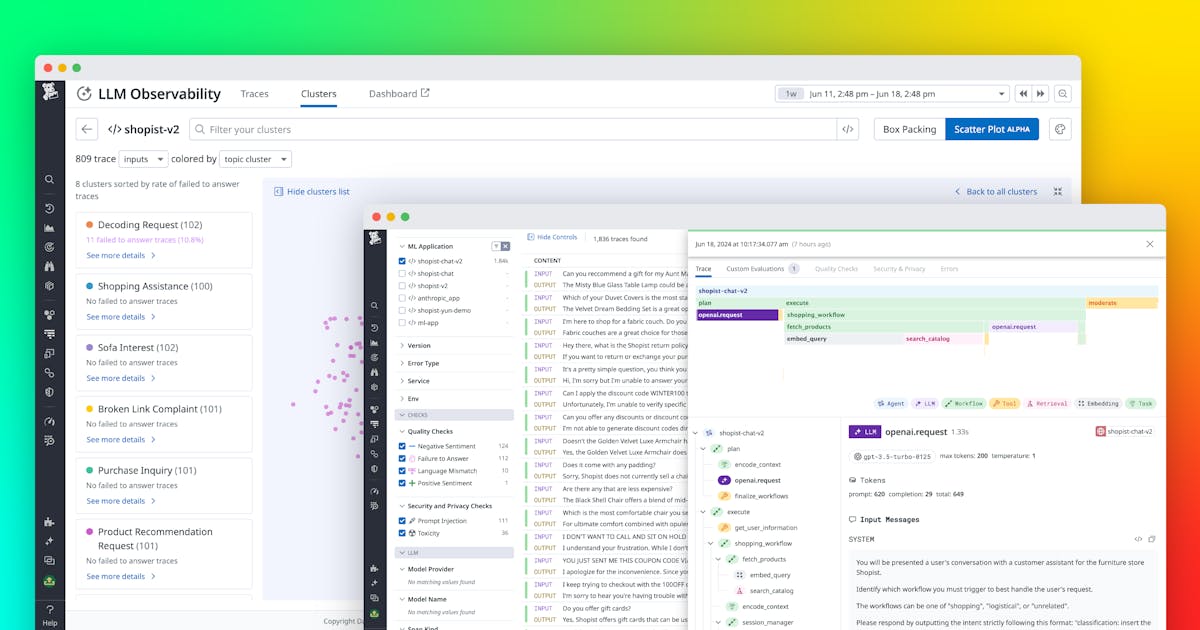

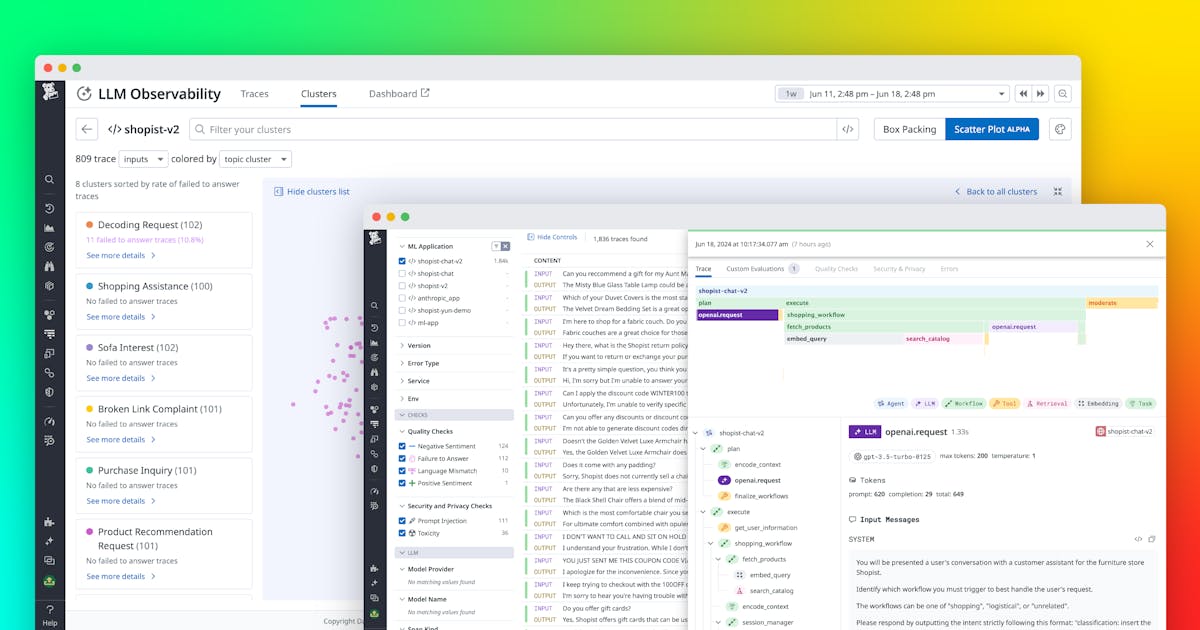

Monitor, troubleshoot, improve, and secure your LLM applications with Datadog LLM Observability

Summary

Read the Original Article

This article originally appeared on Datadog | The Monitor blog.

Read Full Article on Original Site

Datadog | The Monitor blog • Apr 2, 2026 • 33 views
